For the last few years, the public face of AI has been the prompt.

Ask a question, and the model responds. Refine the instruction, and the answer improves. It can write, summarize, reason, generate code, explain ideas, and simulate expertise with remarkable fluency. That experience has been powerful enough to reshape expectations of what software can do.

And yet, beneath that progress, there has been a fundamental limitation: most AI systems have been brilliant in the moment, but weak across time.

They could reason inside the current interaction, but they did not truly carry forward what mattered. They did not reliably retain the significance of prior outcomes, the preferences of a user, the context of an evolving workflow, or the lessons of repeated interactions in a disciplined and usable way. In effect, they were intelligent, but largely stateless.

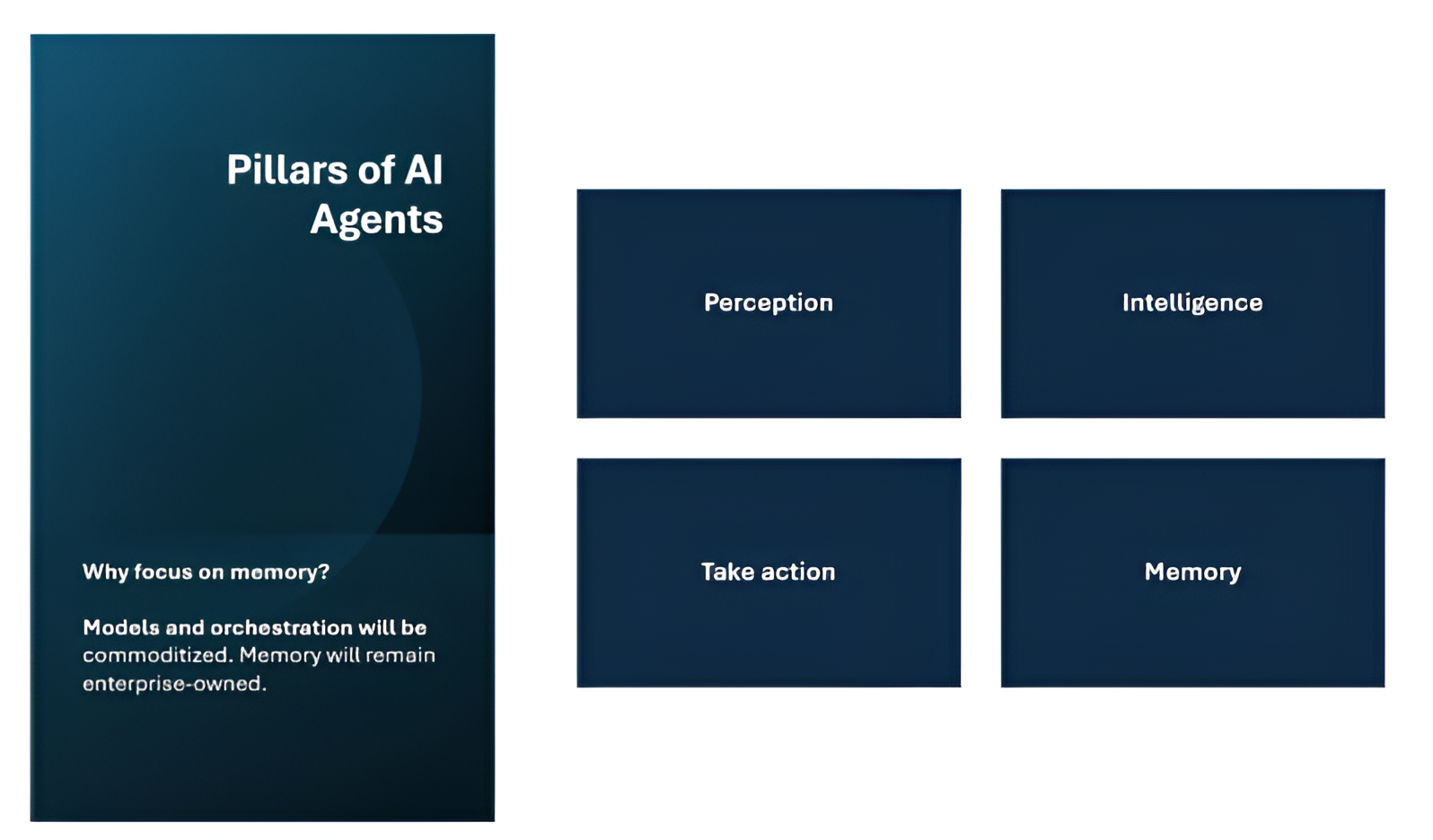

That is why the rise of AI agents matters so much.

An agent is not simply a model that produces language. It is a system that can pursue an objective. It can interpret context, reason through alternatives, use tools, take action, observe results, and, increasingly, adapt its future behavior. The moment a system starts doing that, memory ceases to be an optional enhancement. It becomes central to the architecture.

In many ways, this is the real shift now underway. We are moving from systems that can respond intelligently to systems that can accumulate usefulness. And the layer that makes that possible is memory.


Table of Contents

- Why Memory Changes the Meaning of Intelligence

- What Agent Memory Really Is

- The Five Forms of Memory That Matter

- Why Agent Memory Will Be Different From Human Memory

- Memory Is Not a Store. It Is a Lifecycle

- The Hard Problems in Agent Memory

- How the Memory Problem Should Be Solved

- The Role of the Tooling Stack

- How Memory and Enterprise Data Come Together

- The Future of Agent Memory

- Closing Thought

Why Memory Changes the Meaning of Intelligence

The most important thing memory adds to an agent is not storage. It is continuity.

Without memory, an AI system may still be impressive. It may answer brilliantly, plan competently, and even execute a task well. But each interaction remains too isolated. The system does not meaningfully build on what came before. It does not carry experience forward in a reliable way.

Memory changes that model. It allows the agent to connect the present with the past and use that connection to improve future action. It can remember what worked, what failed, what a user prefers, what stage a task is in, which route was already tried, which rule applies, and which outcome should influence the next decision. Memory turns intelligence from a one-time event into a cumulative process.

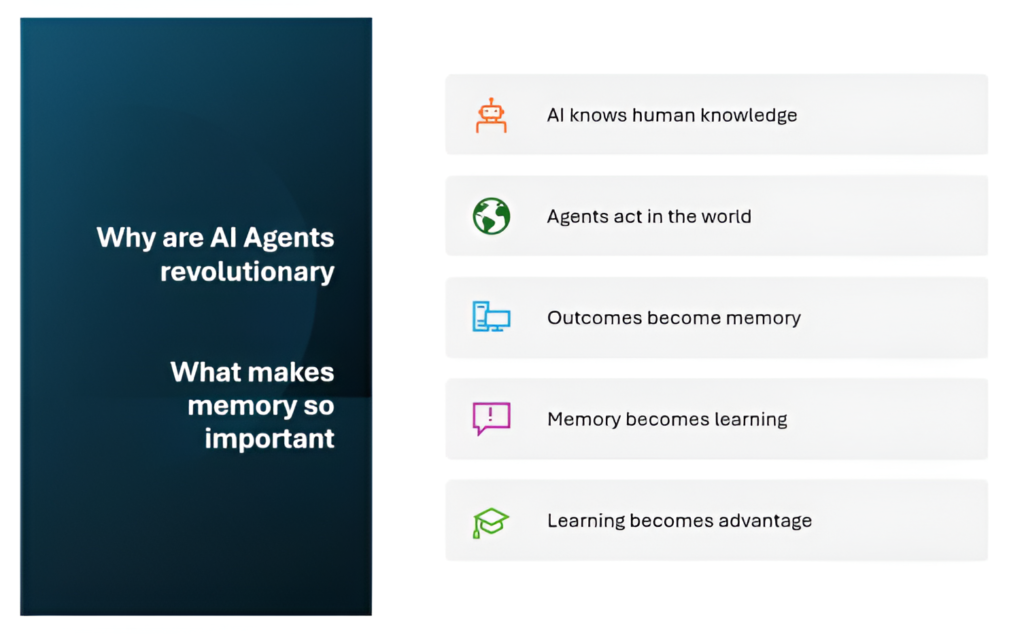

That is one of the reasons AI agents feel revolutionary. Traditional AI has largely reflected human knowledge as captured in text, documents, data, and code. It knew what people had recorded. Agents introduce a new dynamic: they can act, observe the result of that action, and retain the outcome as future guidance. Over time, that means they can build a form of operational knowledge, not just inherited knowledge.

This does not make human judgment less important. If anything, it makes human direction more important, because now we are designing systems that can carry lessons forward. The excitement is not that agents will make people irrelevant. The excitement is that software is beginning to develop continuity, and continuity is what turns isolated capability into durable capability.

What Agent Memory Really Is

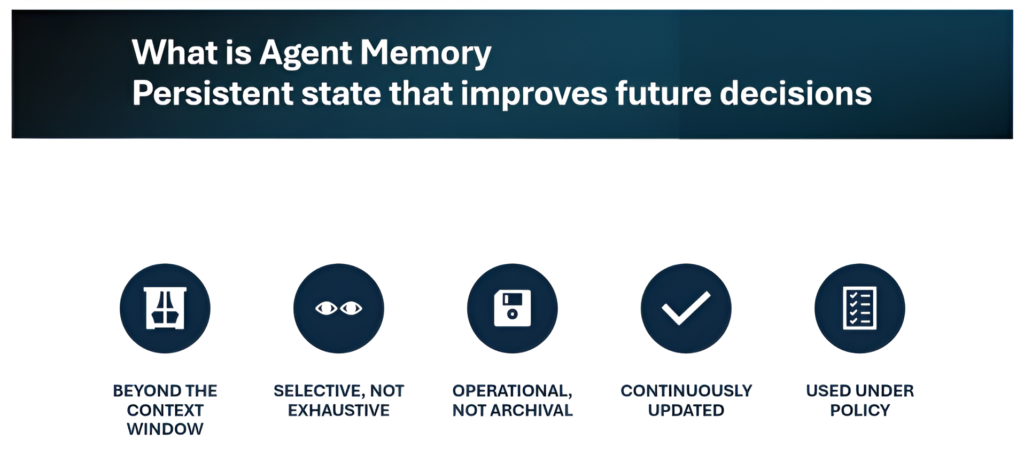

Agent memory is often described too loosely. Sometimes it is confused with a longer context window. Sometimes with chat history. Sometimes with saving embeddings in a vector database. Those things may contribute to memory, but none of them is the full concept.

The most useful definition is this:

Agent memory is persistent state that improves future decisions and actions.

That definition matters because it draws two important distinctions.

First, memory is not the same as context. Context is temporary. It exists inside the current interaction. Memory persists beyond it. It can influence future reasoning, future workflows, and future actions even after the original interaction has ended.

Second, memory is not the same as raw storage. Memory is not merely information kept somewhere. It is information retained because it has expected future value. A mature memory system is not a dump of everything the agent has seen. It is selective. It keeps what matters, ignores what does not, and retrieves only what is useful for the task at hand.

That point is easy to underestimate. In practice, the quality of an agent memory system is determined less by how much it stores and more by how intelligently it decides what deserves to live.

The Five Forms of Memory That Matter

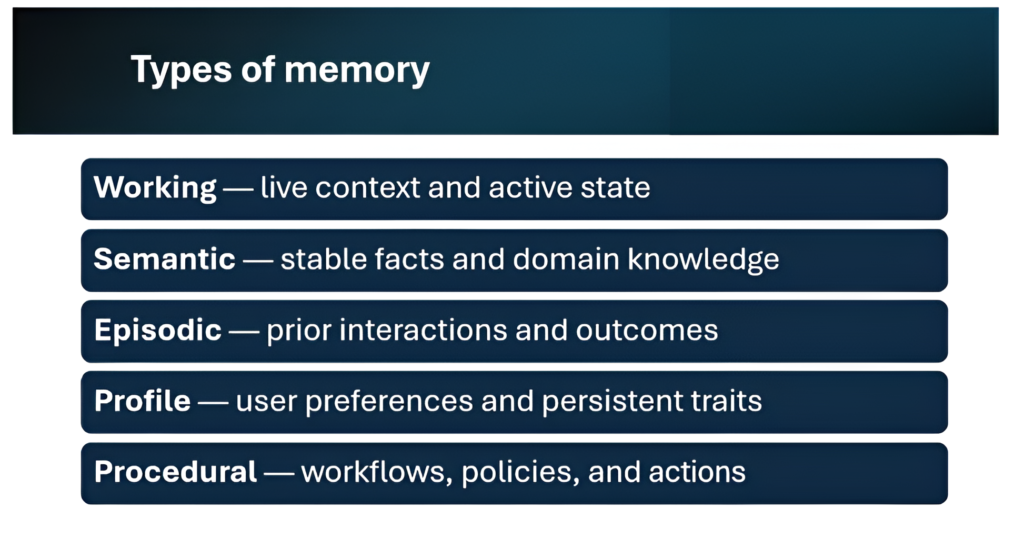

One reason discussions about memory become vague is that the word “memory” is often used as if it refers to one thing. In practice, an agent may need several distinct types of memory, each with different behavior and different architectural needs.

Working memory is the agent’s live, in-flight state. It includes what is happening right now: the active task, current goals, recent tool outputs, unresolved entities, and immediate reasoning context. It is dynamic and short-lived.

Semantic memory is stable knowledge. It includes facts, policies, domain definitions, product knowledge, business rules, and reference information. This is what the agent knows about the world or the enterprise.

Episodic memory is memory of prior interactions and outcomes. It captures what happened before: what the user asked, what was recommended, what succeeded, what failed, what was corrected, and what was escalated. This is where something like accumulated experience begins to emerge.

Profile memory captures persistent user- or entity-specific traits: preferences, recurring constraints, priorities, communication patterns, and other durable signals that shape personalization.

Procedural memory is knowledge of how to do things. It includes workflows, playbooks, action sequences, policy-driven processes, and repeated operational patterns. Procedural memory is what makes an agent feel less like a chatbot and more like a capable operator.

These are not just conceptual categories. They matter because they imply different design choices. Working memory may live close to runtime state. Profile memory may require stronger structure and privacy controls. Episodic memory may benefit from event traces and summaries. Semantic memory may need alignment with source-of-truth knowledge systems. Procedural memory may intersect with orchestration and workflow engines.
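
One way to make these categories concrete is to give every stored item an explicit type. The sketch below is illustrative only: `MemoryType`, `MemoryRecord`, and the field names are hypothetical and not taken from any particular framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class MemoryType(Enum):
    WORKING = "working"        # live, in-flight task state
    SEMANTIC = "semantic"      # stable facts, policies, definitions
    EPISODIC = "episodic"      # prior interactions and outcomes
    PROFILE = "profile"        # durable user or entity traits
    PROCEDURAL = "procedural"  # workflows and playbooks


@dataclass
class MemoryRecord:
    memory_type: MemoryType
    subject: str   # who or what the memory is about (hypothetical field)
    content: str
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    @property
    def is_durable(self) -> bool:
        # Working memory is short-lived; every other type persists across sessions.
        return self.memory_type is not MemoryType.WORKING


pref = MemoryRecord(MemoryType.PROFILE, "user-42", "prefers concise summaries")
scratch = MemoryRecord(MemoryType.WORKING, "task-7", "waiting on tool output")
```

Tagging each record with its type lets the rest of the system route it to a different store, retention rule, or privacy control without inspecting the content.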

The phrase “agent memory” sounds singular. The reality is much more layered.

Why Agent Memory Will Be Different From Human Memory

It is tempting to think about AI memory purely through analogy with human memory. That analogy is useful up to a point, but it also has limits.

Human memory is extraordinarily powerful, yet deeply imperfect. We remember selectively. We forget details. We compress years of experience into intuition. We sometimes recall meaning without exact words, emotion without chronology, or pattern without precision. Our memory is adaptive, but it is also imprecise.

Agent memory will evolve differently.

It can be explicit where human memory is fuzzy. It can be timestamped where ours is approximate. It can be structured, queryable, shared, audited, versioned, and governed. Over time, it may become stronger than human memory in some dimensions: long-range recall, preservation of operational detail, exact relationship tracking, high-volume memory retrieval, and the ability to combine structured and unstructured context at scale.

That does not make it “better” in every sense. It makes it different. Human memory is deeply tied to meaning, emotion, judgment, and lived experience. Agent memory will be more mechanical, more engineered, and in many settings, more operationally precise. The significance of that should not be framed as something negative. It should be understood as part of the broader expansion of intelligent systems.

Just as databases did not replace human understanding but vastly expanded organizational memory, agent memory will expand what software can retain and apply. It will allow intelligent systems to carry context forward in ways that are more disciplined and more durable than anything conversational AI has done so far.

Memory Is Not a Store. It Is a Lifecycle

This is where the topic becomes technically serious.

A weak approach to agent memory is to think of it as a storage problem. Save the interaction somewhere, retrieve it later, and call that memory. That approach may work for demos, but it breaks down quickly in real-world use.

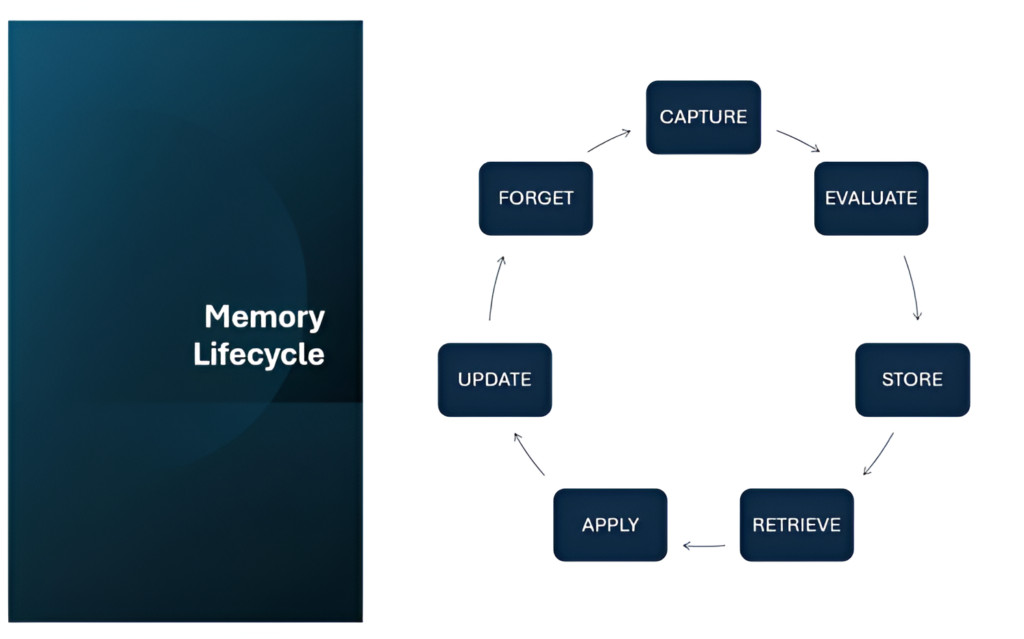

Memory is better understood as a lifecycle.

Something happens. The system captures a signal. It decides whether the signal deserves persistence. It assesses what type of memory it is. It stores it in the right form. Later, it retrieves that memory when needed. It applies it to shape reasoning or action. Then it may update, consolidate, reinforce, invalidate, or forget that memory over time.

That lifecycle is where memory becomes difficult.

If everything is stored, the system accumulates noise. If too little is stored, it loses continuity. If memory is not updated, it becomes stale. If contradictions are not handled, the agent becomes inconsistent. If nothing is forgotten, memory quality erodes. If governance is weak, the memory layer becomes risky.
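
The lifecycle described above can be read as a small state machine. The following is a minimal sketch under that assumption; the stage names and the transition table are hypothetical simplifications, not a standard.

```python
from enum import Enum, auto


class Stage(Enum):
    CAPTURED = auto()     # a signal was observed
    STORED = auto()       # judged worth keeping, classified, persisted
    RETRIEVED = auto()    # recalled and applied to a decision
    INVALIDATED = auto()  # contradicted or found to be stale
    FORGOTTEN = auto()    # removed, or never persisted


# Allowed transitions; anything not listed is rejected.
TRANSITIONS = {
    Stage.CAPTURED: {Stage.STORED, Stage.FORGOTTEN},
    Stage.STORED: {Stage.RETRIEVED, Stage.INVALIDATED, Stage.FORGOTTEN},
    Stage.RETRIEVED: {Stage.STORED, Stage.INVALIDATED},   # reinforced or contradicted
    Stage.INVALIDATED: {Stage.STORED, Stage.FORGOTTEN},   # corrected or dropped
    Stage.FORGOTTEN: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Move a memory to the next stage, or raise if the move is not allowed."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.name} to {target.name}")
    return target
```

Making the transitions explicit forces a decision at every step, including the ones that are easy to skip, such as invalidation and forgetting.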

This is why memory should be treated as a subsystem, not a feature. Its quality depends not on one storage technology, but on the discipline applied across its full lifecycle.

The Hard Problems in Agent Memory

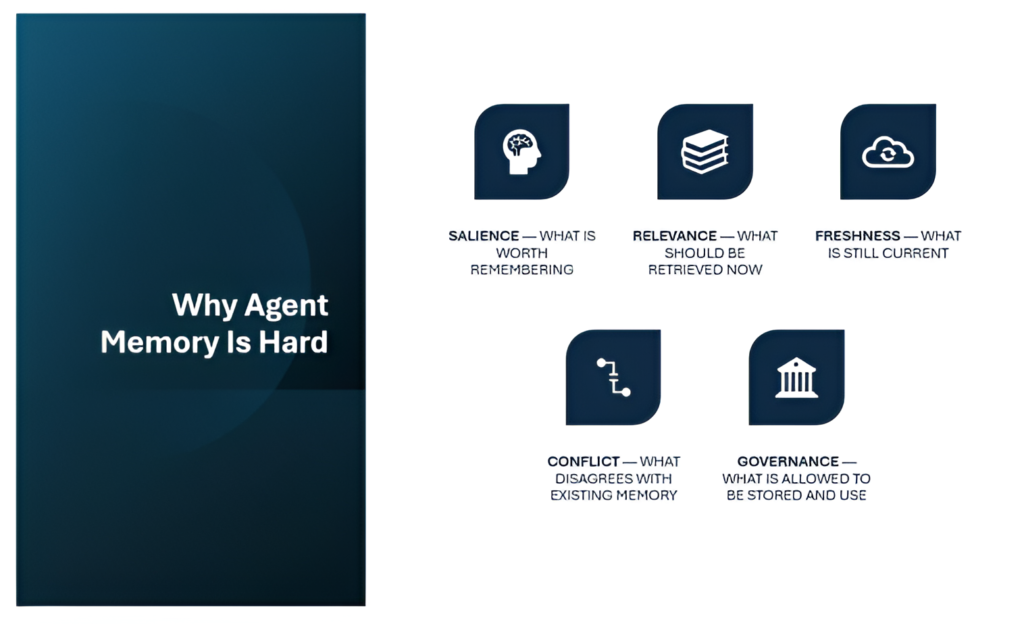

The hardest memory problems are not abstract. They are very practical.

The first is deciding what is worth remembering. Most interactions contain a mix of trivial, temporary, and meaningful signals. The challenge is not capacity. It is judgment. A memory system must determine what is likely to matter again.

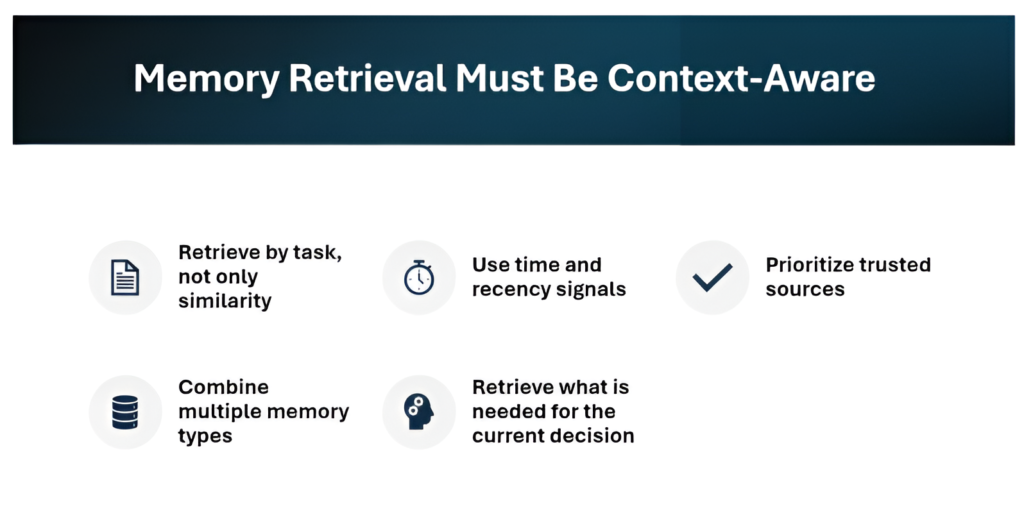

The second is deciding what should be retrieved now. Retrieval is often treated too simplistically. Similarity search is useful, but the memory that is most semantically similar is not always the memory that is most relevant to the current decision. Good retrieval must consider task intent, recency, trust, workflow stage, and context.
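
To illustrate why similarity alone is not enough, here is a hedged sketch of a blended relevance score. The `Candidate` fields, the weights, and the 30-day half-life are arbitrary assumptions chosen for the example; a real system would tune or learn them.

```python
import math
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    similarity: float  # 0..1, e.g. from an embedding search
    age_days: float    # time since the memory was last confirmed
    trust: float       # 0..1, e.g. explicit statement vs. inference
    stage: str         # workflow stage the memory was recorded in


def relevance(c: Candidate, current_stage: str, half_life_days: float = 30.0) -> float:
    """Blend similarity with recency, trust, and workflow stage (weights are arbitrary)."""
    recency = math.exp(-math.log(2) * c.age_days / half_life_days)
    stage_match = 1.0 if c.stage == current_stage else 0.0
    return 0.4 * c.similarity + 0.2 * recency + 0.2 * c.trust + 0.2 * stage_match


candidates = [
    Candidate("Old note: user wanted weekly reports", 0.95, 365, 0.3, "onboarding"),
    Candidate("Last week: user asked for monthly reports", 0.80, 7, 0.9, "reporting"),
]
best = max(candidates, key=lambda c: relevance(c, current_stage="reporting"))
```

In this example the older note is the closer semantic match, yet the recent, trusted, stage-appropriate memory scores higher and is the one selected.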

The third is freshness. A remembered fact may no longer be true. Preferences change. Rules evolve. Prior outcomes may no longer be predictive. Memory that was once useful can become misleading.

The fourth is conflict. Older and newer memories may disagree. A user may have changed their mind. A process may have been updated. A stored memory may have been inferred incorrectly in the first place. The system needs a way to handle contradiction without collapsing into confusion.
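
One simple way to handle contradiction is an explicit precedence rule. The sketch below assumes a hypothetical `Claim` record and a rule where stated facts outrank inferred ones and, otherwise, newer beats older. Other policies are equally defensible.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Claim:
    key: str        # what the memory is about, e.g. "report_frequency"
    value: str
    recorded: date
    explicit: bool  # True if the user stated it, False if the agent inferred it


def resolve(existing: Claim, incoming: Claim) -> Claim:
    """Pick which of two conflicting claims about the same key should win."""
    if existing.key != incoming.key:
        raise ValueError("claims describe different keys")
    # An explicit statement outranks an inference, regardless of age.
    if existing.explicit != incoming.explicit:
        return existing if existing.explicit else incoming
    # Otherwise the more recent claim wins; ties keep the existing one.
    return incoming if incoming.recorded > existing.recorded else existing


old = Claim("report_frequency", "weekly", date(2024, 1, 10), explicit=True)
guess = Claim("report_frequency", "daily", date(2024, 3, 1), explicit=False)
update = Claim("report_frequency", "monthly", date(2024, 4, 2), explicit=True)
```

Here `resolve(old, guess)` keeps the stated weekly preference over the later inference, while `resolve(old, update)` accepts the newer explicit statement.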

The fifth is governance. What can be stored? For how long? For what purpose? Under what access controls? Can it be deleted? Can it be audited? This is where memory becomes an enterprise concern, not just an engineering one.
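
Governance questions like these can be encoded as policy that the memory layer checks on every read. The following is a minimal sketch; the categories, roles, purposes, and retention periods are invented for illustration and do not reflect any regulation or product.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class RetentionPolicy:
    purpose: str
    max_age_days: int
    allowed_roles: frozenset


# Hypothetical policies keyed by memory category.
POLICIES = {
    "profile": RetentionPolicy("personalization", 365, frozenset({"agent", "support"})),
    "episodic": RetentionPolicy("quality review", 90, frozenset({"agent", "auditor"})),
}


def may_read(category: str, role: str, stored_on: date, today: date) -> bool:
    """Allow access only if a policy exists, the role is permitted, and the record is in date."""
    policy = POLICIES.get(category)
    if policy is None:
        return False  # no policy means no access
    expired = today - stored_on > timedelta(days=policy.max_age_days)
    return role in policy.allowed_roles and not expired
```

Defaulting to denial when no policy exists keeps unclassified memory from becoming readable by accident.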

All of these challenges point to the same conclusion: remembering well is much harder than remembering more.

How the Memory Problem Should Be Solved

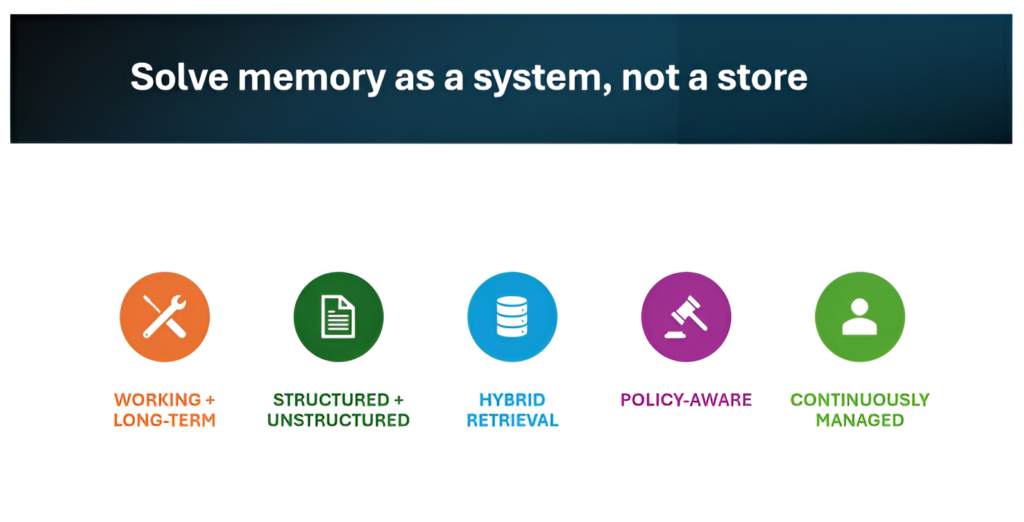

A strong memory system is not built by adding one database or one framework. It is built by making disciplined design choices.

The first is to separate working memory from long-term memory. The state needed for the current interaction should not be treated the same way as durable memory retained across time.

The second is to use multiple forms of representation. Some memory should be structured. Some should be unstructured. Some should be retrievable semantically. Some should be relational. A preference, a prior interaction summary, and a workflow pattern are not the same kind of object and should not be handled identically.

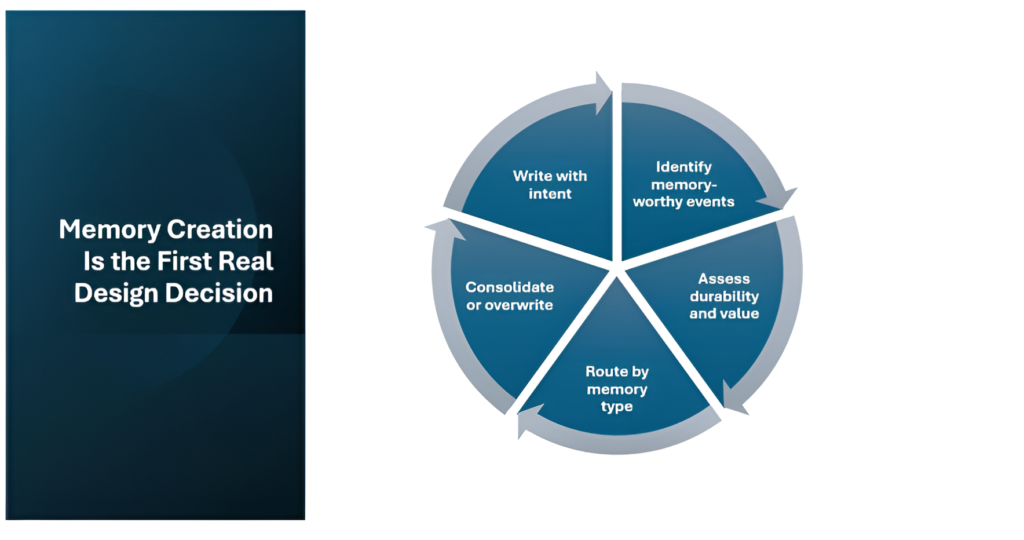

The third is to make memory creation selective. This is one of the most overlooked design areas. Memory should not be a byproduct of logging everything. It should be a controlled process that captures the right signals, judges what is worth keeping, classifies the memory type, merges or overwrites intelligently, and persists with intent.
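
Selective creation can be sketched as a gate that every signal must pass before it is persisted. The `Signal` fields, the source labels, and the rules in `classify` are hypothetical stand-ins for whatever judgment a real system applies.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Signal:
    text: str
    source: str        # "user_statement", "tool_result", or "small_talk"
    repeated: int = 1  # how many times this signal has been observed


def classify(signal: Signal) -> Optional[str]:
    """Return a memory type for signals worth keeping, or None to discard."""
    if signal.source == "small_talk":
        return None
    if signal.source == "user_statement":
        return "profile"
    # Keep tool results only once they recur, as a crude proxy for future value.
    if signal.source == "tool_result" and signal.repeated >= 2:
        return "episodic"
    return None


def write(store: dict, signal: Signal) -> bool:
    """Persist the signal if it passes the gate; an identical entry is overwritten, not duplicated."""
    memory_type = classify(signal)
    if memory_type is None:
        return False
    store[(memory_type, signal.text)] = signal
    return True


store = {}
write(store, Signal("Nice weather today", "small_talk"))
write(store, Signal("Prefers email over phone", "user_statement"))
write(store, Signal("Prefers email over phone", "user_statement"))
```

After these three calls the store holds a single profile entry: the small talk was discarded and the repeated preference was merged rather than logged twice.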

The fourth is to make retrieval context-aware. Memory retrieval should be shaped by the current task, current decision, time, trust, and scope. The goal is not just to retrieve similar information. It is to retrieve what is genuinely useful for the present moment.

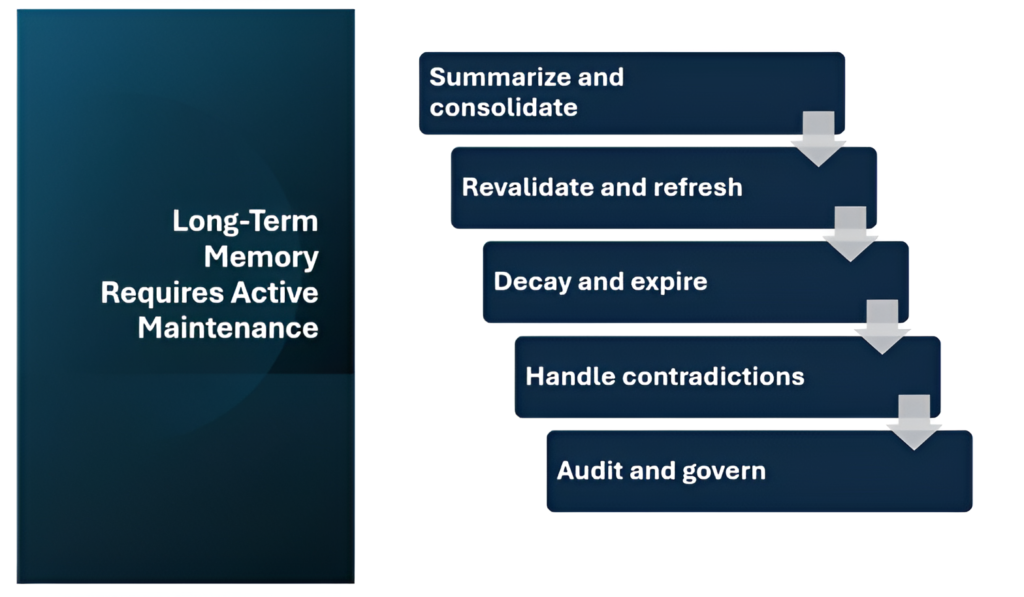

The fifth is to treat maintenance as part of the design. High-quality memory requires summarization, consolidation, revalidation, expiry, contradiction handling, and forgetting. Long-term quality does not emerge automatically. It is maintained.
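
Maintenance can start as a periodic pass that sorts memories into keep, revalidate, and forget. This is a sketch under simple assumptions: the `Item` fields and the 60-day and 180-day thresholds are arbitrary, and summarization and consolidation are left out for brevity.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Item:
    text: str
    last_confirmed: date
    hits: int = 0  # how often retrieval actually used this item


def maintain(items: list, today: date, ttl_days: int = 180, review_days: int = 60):
    """Split items into those to keep, those to revalidate, and those to forget."""
    keep, revalidate, forget = [], [], []
    for item in items:
        age = today - item.last_confirmed
        if age > timedelta(days=ttl_days) and item.hits == 0:
            forget.append(item)      # old and never used
        elif age > timedelta(days=review_days):
            revalidate.append(item)  # possibly stale; confirm before relying on it
        else:
            keep.append(item)
    return keep, revalidate, forget


items = [
    Item("prefers email", date(2024, 6, 1), hits=4),
    Item("uses legacy export", date(2024, 3, 1), hits=2),
    Item("asked about beta feature", date(2023, 10, 1), hits=0),
]
keep, revalidate, forget = maintain(items, today=date(2024, 6, 30))
```

Running a pass like this on a schedule turns forgetting into a deliberate decision instead of something that never happens.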

When looked at this way, memory becomes one of the most sophisticated parts of agent architecture. It sits at the intersection of reasoning, personalization, workflow execution, data design, governance, and system quality.

The Role of the Tooling Stack

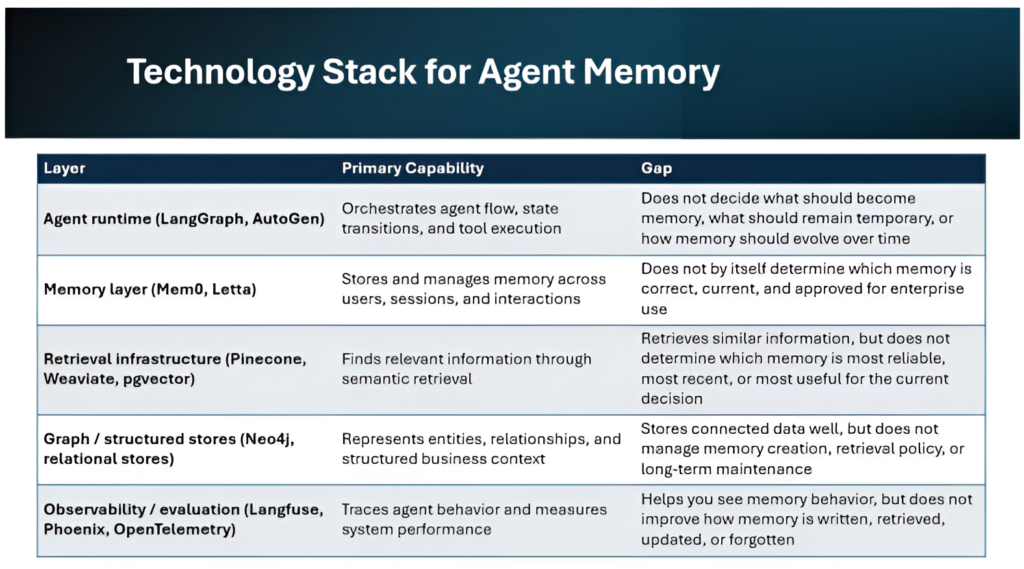

There is now a growing ecosystem of technologies relevant to agent memory. Runtimes help orchestrate state, tool use, and long-running execution. Memory layers provide persistence across users and sessions. Retrieval infrastructure supports semantic recall. Graph and structured stores help model entities and relationships. Observability platforms help teams trace behavior and evaluate whether memory actually improved outcomes.

These tools are all useful. But they do not, by themselves, solve the problem.

The tooling stack provides capabilities. The memory system provides logic.

That distinction matters because many organizations will assume that adopting a memory framework or a vector store is equivalent to building memory. It is not. The more important questions remain: what becomes memory, what stays temporary, how memory is governed, how it evolves over time, and how it changes the quality of action.

This is also why memory is likely to remain one of the most enterprise-owned parts of the agent stack. Base models will come from a small number of powerful providers. Orchestration frameworks will mature. Tool ecosystems will standardize. But memory policy, business-specific retained state, organization-specific action patterns, and the governance of persistent context will remain highly local problems.

That makes memory strategically important.

How Memory and Enterprise Data Come Together

One of the most important architectural clarifications is that agent memory does not replace the data lake, warehouse, or lakehouse.

Enterprise data platforms remain the place where large-scale historical, transactional, analytical, and governed business data lives. Agents will absolutely need that data. But the role of memory is different.

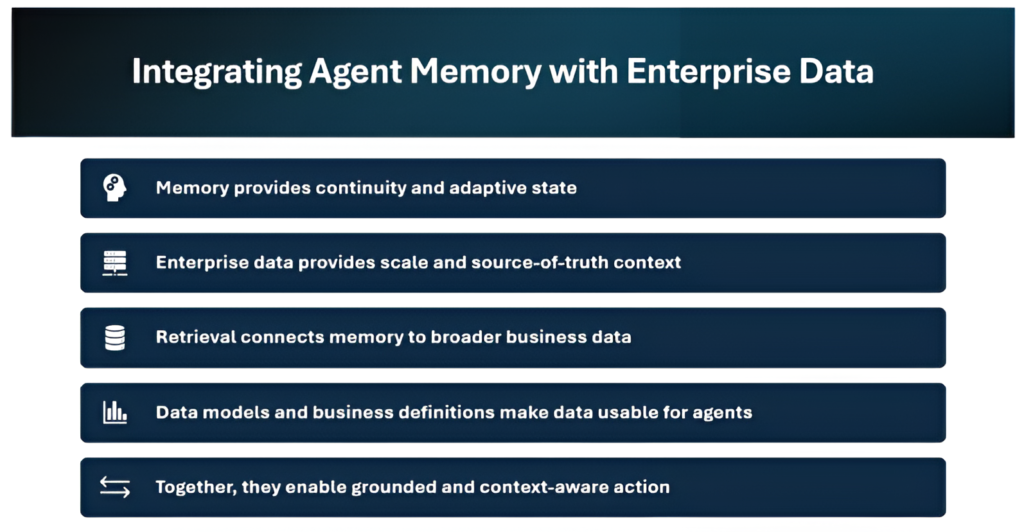

Memory provides continuity and adaptive state. Enterprise data provides scale, history, and source-of-truth depth. Retrieval connects the two. Data models and business definitions make that data interpretable and usable for the agent.

This is a powerful combination. It allows the agent to retain what matters for future action without turning memory into a copy of the entire enterprise record. The agent can remember a preference, an outcome, a workflow state, or a compact lesson from prior interaction, while still grounding itself in broader enterprise truth when needed.
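
The division of labor can be sketched as a function that combines compact remembered state with a fresh read from the system of record. Everything here is a stand-in: `build_context`, the in-memory dictionaries, and the field names are hypothetical, and a real deployment would query a governed data platform instead.

```python
from typing import Callable


def build_context(
    user_id: str,
    memory: dict,
    fetch_record: Callable[[str], dict],
) -> dict:
    """Combine compact remembered state with a fresh read from the system of record."""
    remembered = memory.get(user_id, {})
    record = fetch_record(user_id)  # authoritative data is read on demand, not copied into memory
    return {"remembered": remembered, "source_of_truth": record}


# Stand-ins for a memory layer and an enterprise data platform.
memory = {"user-42": {"preferred_currency": "EUR", "last_outcome": "refund approved"}}
warehouse = {"user-42": {"open_orders": 3, "lifetime_value": 1820.50}}

context = build_context("user-42", memory, lambda uid: warehouse[uid])
```

The memory entry stays small, holding a preference and a prior outcome, while order counts and other business facts are fetched from their source each time they are needed.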

In that sense, the future is not memory versus data platform. It is memory layered on top of enterprise data engineering, with retrieval and business context bridging the gap.

The Future of Agent Memory

The direction of travel is becoming clearer.

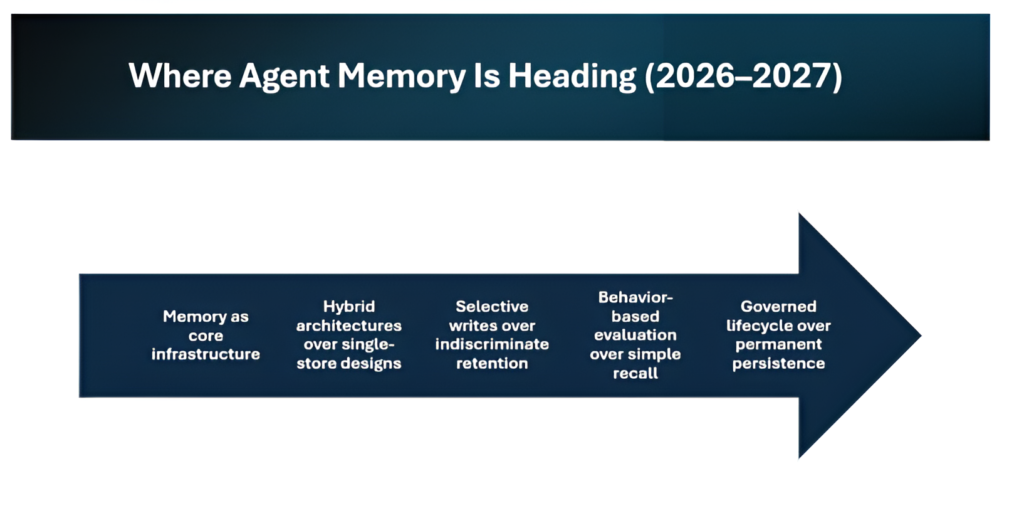

Memory is moving from feature to subsystem. From vector-only approaches to hybrid architectures. From passive storage to selective memory formation. From static retrieval tests to behavior-level evaluation. From indefinite persistence to governed lifecycle and forgetting.

That trajectory is significant because it means the industry is beginning to understand what memory actually is. Not a convenience feature, but a control system for useful intelligence over time.

If the first phase of AI was about generating answers, the next phase may be about accumulating context. Agents will not simply know what humanity has written. They will increasingly know what has been learned through use, through interaction, through repeated workflows, through observed outcomes, and through structured retention of what matters.

That is an exciting future.

The systems that matter most may not be the ones that merely produce the most fluent response in a single turn. They may be the ones that remember with the greatest discipline, retrieve with the greatest precision, adapt with the greatest continuity, and forget with the greatest intelligence.

Closing Thought

For all the attention given to models, prompts, and interfaces, memory may become the quiet technology that matters most.

- Not because it is flashy.

- Not because it is easy.

- But because it is the layer that allows intelligence to persist, evolve, and compound.

That is what makes agent memory so important. It is not merely another technical layer in agent architecture. It is the foundation that can turn momentary intelligence into lasting capability, allowing agents not just to respond well once, but to improve, adapt, and become more valuable over time.
