
A blog site on our Real life experiences with various phases of DevOps starting from VCS, Build & Release, CI/CD, Cloud, Monitoring, Containerization.

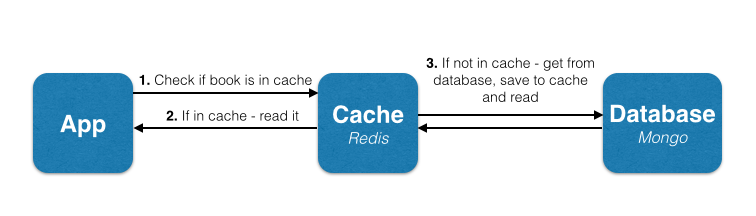

Sometimes getting data from disk can be time-consuming. To improve performance, we can keep the data that must be served first or quickly in Redis memory, while the rest of the data stays in the main database. The whole architecture looks like this:

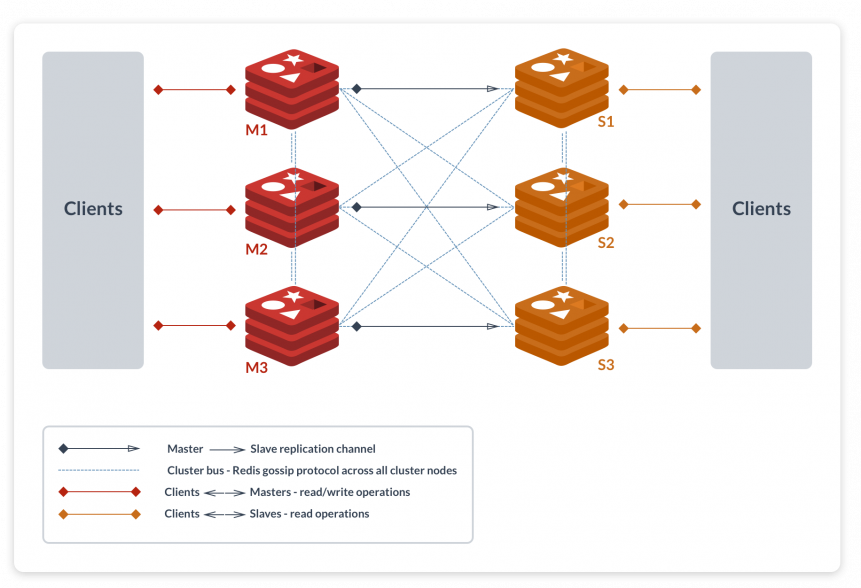
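To make the read path concrete, here is a minimal sketch of the idea, assuming a cache-aside pattern. The class names `SlowDatabase` and `CachedStore` are hypothetical, and a plain dictionary stands in for Redis so the example runs without a server.

```python
class SlowDatabase:
    """Hypothetical stand-in for the disk-backed main database."""

    def __init__(self, rows):
        self._rows = dict(rows)
        self.reads = 0  # counts how often the "disk" is hit

    def get(self, key):
        self.reads += 1
        return self._rows.get(key)


class CachedStore:
    """Cache-aside reads: check the in-memory cache first, then the database."""

    def __init__(self, database):
        self.database = database
        self.cache = {}  # plays the role of Redis in this sketch

    def get(self, key):
        if key in self.cache:
            return self.cache[key]          # fast path, no disk access
        value = self.database.get(key)      # slow path, read from the database
        if value is not None:
            self.cache[key] = value         # remember it for the next request
        return value


db = SlowDatabase({"user:1": "alice", "user:2": "bob"})
store = CachedStore(db)
first = store.get("user:1")   # miss: goes to the database
second = store.get("user:1")  # hit: served from the cache
print(first, second, db.reads)
```

Only the first lookup touches the database; the repeat is answered from memory, which is the effect the architecture above is after.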

Let's begin with Redis master-slave replication. In this setup, Redis can replicate data to any number of nodes, i.e., each slave holds an exact copy of its master's data. This helps with performance, for example by letting the slaves serve reads.

I bet now you can use Redis as a Database.

A Redis cluster is simply a data sharding strategy. It automatically partitions data across multiple Redis nodes. It is an advanced feature of Redis which achieves distributed storage and prevents a single point of failure.

Replication is also known as mirroring of data. In replication, all the data gets copied from the master node to the slave node.

Sharding is also known as partitioning. It splits the data by key across multiple nodes.

In replication, as shown in the figure above, all keys 1, 2, 3, 4 are stored on both machine A and machine B.

In sharding, the keys are distributed across machines A and B. That is, machine A holds keys 1 and 3, and machine B holds keys 2 and 4.
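The difference can be sketched in a few lines of Python. Redis Cluster maps each key to one of 16384 hash slots using CRC16 of the key (or of the hash tag between `{` and `}` if one is present). The helper names below and the even split of slot ranges between nodes are assumptions made for illustration; a real cluster assigns slot ranges when it is created.

```python
import binascii

HASH_SLOTS = 16384  # number of hash slots in Redis Cluster


def key_hash_slot(key: str) -> int:
    """Return the cluster hash slot for a key (CRC16/XMODEM mod 16384)."""
    data = key.encode("utf-8")
    start = data.find(b"{")
    if start != -1:
        end = data.find(b"}", start + 1)
        if end != -1 and end != start + 1:
            data = data[start + 1:end]  # hash only the non-empty hash tag
    return binascii.crc_hqx(data, 0) % HASH_SLOTS


def replicate(keys, nodes):
    """Replication: every node stores every key."""
    return {node: list(keys) for node in nodes}


def shard(keys, nodes):
    """Sharding: each key lives on exactly one node, chosen by its slot.

    Assumption: the slot space is split evenly between the nodes.
    """
    placement = {node: [] for node in nodes}
    slots_per_node = HASH_SLOTS // len(nodes)
    for key in keys:
        index = min(key_hash_slot(key) // slots_per_node, len(nodes) - 1)
        placement[nodes[index]].append(key)
    return placement


keys = ["1", "2", "3", "4"]
nodes = ["A", "B"]
replicated = replicate(keys, nodes)
sharded = shard(keys, nodes)
print(replicated)
print(sharded)
```

With real hashing the split will not necessarily be the tidy 1, 3 versus 2, 4 of the figure, but each key still ends up on exactly one machine.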

Unfortunately, Redis doesn't have a direct way to migrate data from a Redis master-slave setup to a Redis Cluster. Let me explain why.

We can start the Redis service in either cluster mode or standalone mode. You might suggest changing the Redis configuration on the fly (that is, without restarting the Redis service) with redis-cli. You are absolutely correct that Redis configuration can be changed on the fly, but unfortunately the Redis mode (cluster or standalone) cannot; for that we have to restart the service.

I guess now you guys will understand my situation :).

For migration, there are multiple ways of doing it. However, we needed to migrate the data without downtime or any interruptions to the service.

We decided the best course of action was a step-by-step process:

I know all the steps are easy except the second one. Fortunately, Redis provides a key-scanning method through which we can scan all the keys, take a dump of each one, and then restore it on the new Redis server.
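The scan, dump, and restore loop can be sketched as follows. This is not the code of the utility linked below, only an illustration under stated assumptions: `migrate_keys` is a hypothetical helper that expects objects exposing the redis-py methods `scan_iter`, `dump`, `pttl`, and `restore`, and `InMemoryRedis` is a tiny stand-in so the sketch runs without a server.

```python
def migrate_keys(source, target, batch_size=1000):
    """Copy every key from source to target using SCAN + DUMP + RESTORE.

    Both arguments are assumed to behave like redis-py clients.
    Returns the number of keys copied.
    """
    copied = 0
    for key in source.scan_iter(count=batch_size):
        payload = source.dump(key)
        if payload is None:
            continue  # key expired or was deleted after the scan saw it
        ttl_ms = source.pttl(key)
        if ttl_ms is None or ttl_ms < 0:
            ttl_ms = 0  # RESTORE treats a TTL of 0 as "no expiry"
        target.restore(key, ttl_ms, payload, replace=True)
        copied += 1
    return copied


class InMemoryRedis:
    """Stand-in for a Redis client (not a real client, no expiry support)."""

    def __init__(self, data=None):
        self.data = dict(data or {})

    def scan_iter(self, count=None):
        return iter(list(self.data))

    def dump(self, key):
        return self.data.get(key)

    def pttl(self, key):
        return -1 if key in self.data else -2

    def restore(self, name, ttl, value, replace=False):
        if name in self.data and not replace:
            raise ValueError("BUSYKEY target key already exists")
        self.data[name] = value


old = InMemoryRedis({"a": b"1", "b": b"2"})
new = InMemoryRedis()
copied = migrate_keys(old, new)
print(copied, sorted(new.data))
```

Against real servers you would pass redis-py client objects instead, for instance a `redis.Redis` connection for the old server and a cluster client for the new one. Keep in mind that keys written while the scan is in progress are not guaranteed to be visited, so a zero-downtime migration needs some way to catch late writes.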

To achieve this, I have created a Python utility in which you define the connection details of your old Redis server and your new Redis server.

You can find the utility here.

https://github.com/opstree/redis-migration

I have provided detailed information on using this utility in the README file itself. I hope my experience will help you with your own Redis migration.

I know most people wonder when to use replication and when to use clustering :).

If you have more data than RAM in a single machine, use Redis Cluster to shard the data across multiple nodes.

If you have less data than RAM in a machine, set up master-slave replication with Sentinel in front to handle failover.

The main idea of writing this blog was to spread information about the replication and sharding mechanisms, how to choose the right one, and, if you have mistakenly chosen the wrong one, how to migrate away from it :).

There are multiple factors yet to be explored to improve the migration flow. If you find them before I do, please let me know so I can improve this blog.

I hope I explained everything clearly enough to understand.

Thanks for reading. I'd really appreciate any and all feedback; please leave a comment below if you have any.

Happy Coding!!!!

The script was initially based on this reference:

https://gist.github.com/aniketsupertramp/1ede2071aea3257f9bb6be1d766a88f

Well, as a DevOps engineer, I like to play around with shell scripts and shell commands, especially on a remote system, as it adds some fun. But what's more thrilling than running shell scripts and commands on a remote server? Making them return dynamic web pages or JSON from that remote system.

Yes, for most of us it comes as a surprise that, just like PHP, JSP, and ASP, shell scripts can also return dynamic web pages. But as a wise man said long ago: “where there is a shell there is a way”.

$ cd /etc/apache2/mods-enabled

$ sudo ln -s ../mods-available/cgi.load

$ cd /usr/lib/cgi-bin

$ vim hello.sh

#!/bin/bash

echo "Content-type: text/html"

echo ""

echo "hello world! from shell script"

$ sudo systemctl restart apache2.service

<!doctype html>

<html lang="en">

<head>

<!-- Required meta tags -->

<meta charset="utf-8">

<meta name="viewport" content="width=device-width, initial-scale=1, shrink-to-fit=no">

<!-- Bootstrap CSS -->

<link rel="stylesheet" href="https://stackpath.bootstrapcdn.com/bootstrap/4.3.1/css/bootstrap.min.css" integrity="sha384-ggOyR0iXCbMQv3Xipma34MD+dH/1fQ784/j6cY/iJTQUOhcWr7x9JvoRxT2MZw1T" crossorigin="anonymous">

<title>Hello, world!</title>

</head>

<body>

<h1>All users using the /usr/sbin/nologin shell</h1>

<table class="table">

<thead>

<tr>

<th scope="col">Name</th>

<th scope="col">User Id</th>

<th scope="col">Group Id</th>

</tr>

</thead>

<tbody>

</tbody>

</table>

<!-- Optional JavaScript -->

<!-- jQuery first, then Popper.js, then Bootstrap JS -->

<script src="https://code.jquery.com/jquery-3.3.1.slim.min.js"></script>

<script src="https://cdnjs.cloudflare.com/ajax/libs/popper.js/1.14.7/umd/popper.min.js"></script>

<script src="https://stackpath.bootstrapcdn.com/bootstrap/4.3.1/js/bootstrap.min.js"></script>

</body>

</html>

hello.sh

#!/bin/bash

echo "Content-type: text/html"

echo ""

cat header

cat /etc/passwd | awk -F ':' '{if($7 == "/usr/sbin/nologin"){print "<tr><td>"$1"</td><td>"$3"</td><td>"$4"</td></tr>"}}'

cat footer
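For readers less familiar with awk, the filtering step in hello.sh can be read as the following Python sketch: pick the accounts whose login shell is /usr/sbin/nologin and emit name, user id, and group id as one table row each. The function name `nologin_rows` and the sample input are hypothetical, and the HTML escaping is an addition the shell version does not perform.

```python
from html import escape


def nologin_rows(passwd_text, shell="/usr/sbin/nologin"):
    """Return one HTML table row per passwd entry that uses the given shell."""
    rows = []
    for line in passwd_text.splitlines():
        fields = line.split(":")
        # passwd format: name:password:uid:gid:comment:home:shell
        if len(fields) >= 7 and fields[6] == shell:
            name, uid, gid = fields[0], fields[2], fields[3]
            rows.append(
                f"<tr><td>{escape(name)}</td>"
                f"<td>{escape(uid)}</td>"
                f"<td>{escape(gid)}</td></tr>"
            )
    return rows


# Hypothetical sample input in /etc/passwd format
sample = (
    "root:x:0:0:root:/root:/bin/bash\n"
    "daemon:x:1:1:daemon:/usr/sbin:/usr/sbin/nologin\n"
)
rows = nologin_rows(sample)
print("\n".join(rows))
```

The rows land between the table header and the closing tags of the page, which is why the script prints them between the header and footer parts.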


{
  "variables": {
    "ami_id": "ami-0a574895390037a62",
    "app_name": "httpd"
  },
  "builders": [{
    "type": "amazon-ebs",
    "region": "ap-south-1",
    "vpc_id": "vpc-df95d4b7",
    "subnet_id": "subnet-175b2d7f",
    "source_ami": "{{user `ami_id`}}",
    "instance_type": "t2.micro",
    "ssh_username": "ubuntu",
    "ami_name": "PACKER-DEMO-{{user `app_name` }}",
    "tags": {
      "Name": "PACKER-DEMO-{{user `app_name` }}",
      "Env": "DEMO"
    }
  }],
  "provisioners": [ ]
}
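The `{{user ...}}` placeholders in the template are filled from the `variables` block. The sketch below only illustrates that substitution with a regular expression; it is not how Packer's own template engine is implemented, and `render_user_variables` is a hypothetical helper.

```python
import re

# Matches {{user `name`}} with optional spaces, as used in the template above
USER_VAR = re.compile(r"\{\{\s*user\s+`([^`]+)`\s*\}\}")


def render_user_variables(text, variables):
    """Replace each {{user `name`}} placeholder with its value from variables."""
    def replace(match):
        return variables[match.group(1)]
    return USER_VAR.sub(replace, text)


variables = {"ami_id": "ami-0a574895390037a62", "app_name": "httpd"}
ami_name = render_user_variables("PACKER-DEMO-{{user `app_name` }}", variables)
source_ami = render_user_variables("{{user `ami_id`}}", variables)
print(ami_name)    # PACKER-DEMO-httpd
print(source_ami)  # ami-0a574895390037a62
```

This is why the build log reports the AMI name as PACKER-DEMO-httpd even though the template never spells it out.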

#!/bin/bash

sudo apt-get update

packer validate httpd.json

packer build httpd.json

==> amazon-ebs: Prevalidating AMI Name: PACKER-DEMO-httpd
    amazon-ebs: Found Image ID: ami-0a574895390037a62
==> amazon-ebs: Creating temporary keypair: packer_5cd559df-84ce-ff8a-fa93-0c4477d988e4
==> amazon-ebs: Creating temporary security group for this instance: packer_5cd559e2-ea81-be15-b94a-c28493c0d3ff
==> amazon-ebs: Authorizing access to port 22 from [0.0.0.0/0] in the temporary security groups...
==> amazon-ebs: Launching a source AWS instance...
==> amazon-ebs: Adding tags to source instance
    amazon-ebs: Adding tag: "Name": "Packer Builder"
    amazon-ebs: Instance ID: i-06ed051a3435865c4
==> amazon-ebs: Waiting for instance (i-06ed051a3435865c4) to become ready...
==> amazon-ebs: Using ssh communicator to connect: *.*.*.*
==> amazon-ebs: Waiting for SSH to become available...
==> amazon-ebs: Connected to SSH!
==> amazon-ebs: Stopping the source instance...
    amazon-ebs: Stopping instance
==> amazon-ebs: Waiting for the instance to stop...
==> amazon-ebs: Creating AMI PACKER-DEMO-httpd from instance i-06ed051a3435865c4
    amazon-ebs: AMI: ami-0ce41081a3b649374
==> amazon-ebs: Waiting for AMI to become ready...
==> amazon-ebs: Adding tags to AMI (ami——)...
==> amazon-ebs: Tagging snapshot: snap-0ee3ce80ec289ed24
==> amazon-ebs: Creating AMI tags
    amazon-ebs: Adding tag: "Name": "PACKER-DEMO-httpd"
    amazon-ebs: Adding tag: "Env": "DEMO"
==> amazon-ebs: Creating snapshot tags
==> amazon-ebs: Terminating the source AWS instance...
==> amazon-ebs: Cleaning up any extra volumes...
==> amazon-ebs: No volumes to clean up, skipping
==> amazon-ebs: Deleting temporary security group...
==> amazon-ebs: Deleting temporary keypair...
Build 'amazon-ebs' finished.
==> Builds finished. The artifacts of successful builds are:
--> amazon-ebs: AMIs were created:
ap-south-1: ami——————–

Packer is an open-source tool developed by HashiCorp to create machine images for multiple platforms such as AWS, GCP, Azure, or even VMware. As the name suggests, it packs all your software, packages, and configurations while baking your machine images. Packer is perhaps the only tool on the market right now that focuses solely on creating machine images, giving us the ability to automate the image creation process.

In this blog post, we will learn what Packer does and how it does it. Sounds interesting!

Hope this blog helps you understand the basics of Packer. Having covered the basics, we can now “Get Started With Packer”.