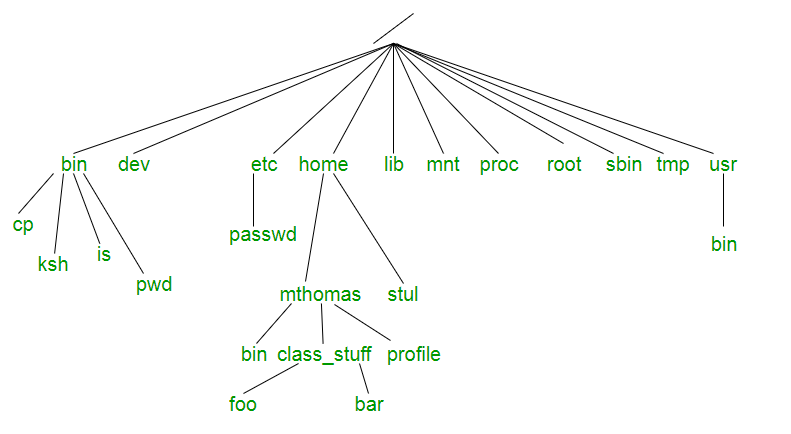

For those who have surfed straight to this blog, please check out the previous part of this series Unix File Tree Part-1 and those who have stayed tuned for this part, welcome back.In the previous part, we discussed the philosophy and the need for file tree. In this part, we will dive deep into the significance of each directory.

Dayum!! that’s a lot of stuff to gulp at once, we’ll kick out things one after the other.

Major directories

Let’s talk about the crucial directories which play a major role.

- /bin: When we started crawling on Linux this helped us to get on our feet yes, you read it right whether you want to copy any file, move it somewhere, create a directory, find out date, size of a file, all sorts of basic operations without which the OS won’t even listen to you (Linux yawning meanwhile) happens because of the executables present in this directory. Most of the programs in /bin are in binary format, having been created by a C compiler, but some are shell scripts in modern systems.

- /etc: When you want things to behave the way you want, you go to /etc and put all your desired configuration there (Imagine if your girlfriend has an /etc life would have been easier). whether it is about various services or daemons running on your OS it will make sure things are working the way you want them to.

- /var: He is the guy who has kept an eye over everything since the time you have booted the system (consider him like Heimdall from Thor). It contains files to which the system writes data during the course of its operation. Among the various sub-directories within /var are /var/cache (contains cached data from application programs), /var/games(contains variable data relating to games in /usr), /var/lib (contains dynamic data libraries and files), /var/lock (contains lock files created by programs to indicate that they are using a particular file or device), /var/log (contains log files), /var/run (contains PIDs and other system information that is valid until the system is booted again) and /var/spool (contains mail, news and printer queues).

- /proc: You can think of /proc just like thoughts in your brain which are illusions and virtual. Being an illusionary file system it does not exist on disk instead, the kernel creates it in memory. It is used to provide information about the system (originally about processes, hence the name). If you navigate to /proc The first thing that you will notice is that there are some familiar-sounding files, and then a whole bunch of numbered directories. The numbered directories represent processes, better known as PIDs, and within them, a command that occupies them. The files contain system information such as memory (meminfo), CPU information (cpuinfo), and available filesystems.

- /opt: It is like a guest room in your house where the guest stayed for prolong period and became part of your home. This directory is reserved for all the software and add-on packages that are not part of the default installation.

- /usr: In the original Unix implementations, /usr was where the home directories of the users were placed (that is to say, /usr/someone was then the directory now known as /home/someone). In current Unixes, /usr is where user-land programs and data (as opposed to ‘system land’ programs and data) are. The name hasn’t changed, but its meaning has narrowed and lengthened from “everything user related” to “user usable programs and data”. As such, some people may now refer to this directory as meaning ‘User System Resources’ and not ‘user’ as was originally intended.

Potato or Potaaato what is the difference?

We’ll be discussing those directories which confuse us always, which have almost a similar purpose but still are in separate locations and when asked about them we go like ummmm…….

/bin vs /usr/bin vs /sbin vs /usr/local/bin

This might get almost clear out when I explained the significance of /usr in the above paragraph. Since Unix designers planned /usr to be the local directories of individual users so it contained all of the sub-directories like /usr/bin, /usr/sbin, /usr/local/bin. But the question remains the same how the content is different?

/usr/bin:

- /usr/bin is a standard directory on Unix-like operating systems that contains most of the executable files that are not needed for booting or repairing the system.

- A few of the most commonly used are awk, clear, diff, du, env, file, find, free, gzip, less, locate, man, sudo, tail, telnet, time, top, vim, wc, which, and zip.

/usr/sbin:

- The /usr/sbin directory contains non-vital system utilities that are used after booting.

- This is in contrast to the /sbin directory, whose contents include vital system utilities that are necessary before the /usr directory has been mounted (i.e., attached logically to the main filesystem).

- A few of the more familiar programs in /usr/sbin are adduser, chroot, groupadd, and userdel.

- It also contains some daemons, which are programs that run silently in the background, rather than under the direct control of a user, waiting until they are activated by a particular event or condition such as crond and sshd.

I hope I have covered most of the directories which you might come across frequently and your questions must have been answered.

Now that we know about the significance of each UNIX directory, It’s time to use them wisely the way they are supposed to be.

Please feel free to reach me out for any suggestions.

Goodbye till next time!